Abstract

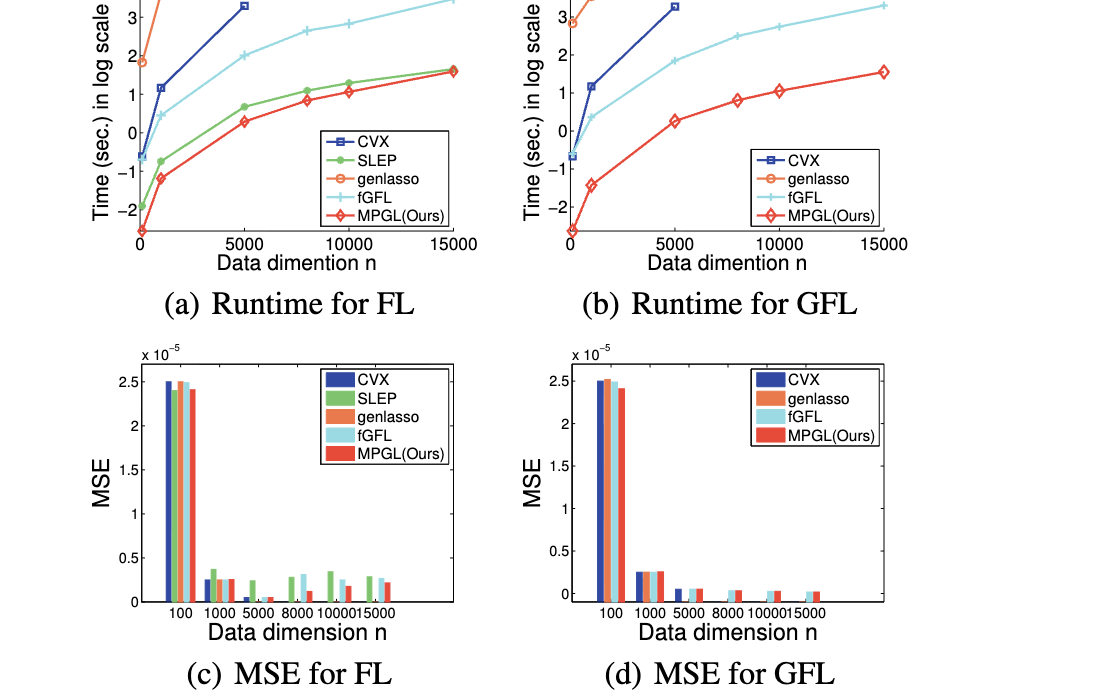

Unlike the traditional LASSO, which enforces sparsity on the variables themselves, the Generalized LASSO (GL) enforces sparsity on a linear transformation of the variables, gaining flexibility and success in many applications. However, many existing GL algorithms do not scale to high-dimensional problems, or work well only for a specific choice of the transformation. We propose an efficient Matching Pursuit Generalized LASSO (MPGL) method that overcomes these issues and is guaranteed to converge to a global optimum. We formulate the GL problem as a convex quadratically constrained linear programming (QCLP) problem and tailor a cutting-plane method to it. More specifically, MPGL iteratively activates a subset of the nonzero elements of the transformed variables and solves a subproblem involving only the activated elements, thus gaining a significant speed-up. Moreover, MPGL is less sensitive to the choice of the trade-off hyper-parameter between data fitting and regularization, which mitigates the long-standing hyper-parameter tuning issue of many existing methods. Experiments demonstrate the superior efficiency and accuracy of the proposed method over state-of-the-art methods in both classification and image processing tasks.

Model

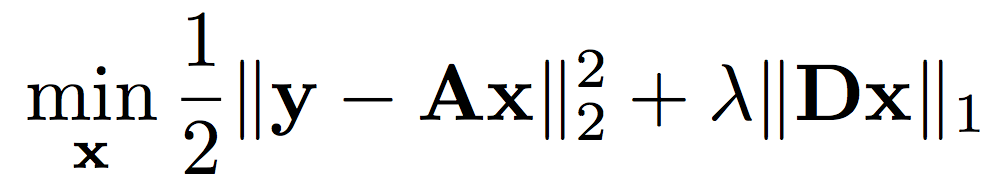

Classical Generalized LASSO Problem

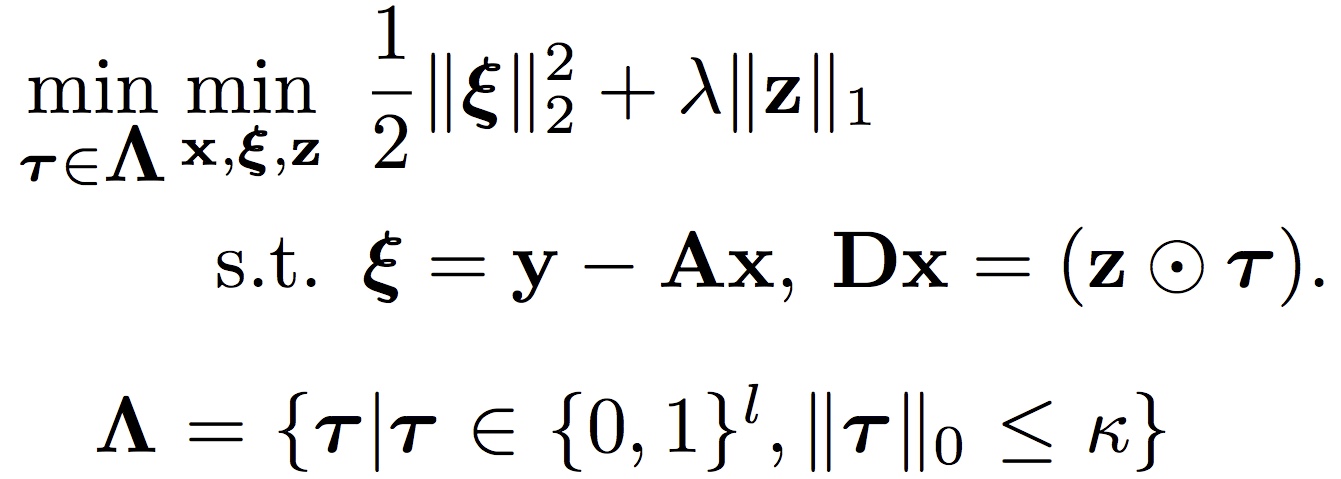
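
As a rough illustration of what "sparsity on a linear transformation of the variables" means, the sketch below evaluates a generalized LASSO objective in plain Python. It assumes the commonly used form 0.5 * ||y - A x||^2 + lam * ||D x||_1, which may differ in notation or scaling from the formulation in the figure above. The function names, the choice of a first-order difference matrix for D, and the toy data are illustrative assumptions, not taken from the paper.

```python
def difference_operator(n):
    """Rows of the (n-1) x n first-order difference matrix D, so (D x)[i] = x[i+1] - x[i]."""
    return [[-1.0 if j == i else 1.0 if j == i + 1 else 0.0 for j in range(n)]
            for i in range(n - 1)]


def identity(n):
    """n x n identity matrix; using it as D recovers the traditional LASSO penalty."""
    return [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]


def matvec(M, v):
    """Dense matrix-vector product for a list-of-rows matrix."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def generalized_lasso_objective(A, y, D, x, lam):
    """Assumed objective: 0.5 * ||y - A x||_2^2 + lam * ||D x||_1."""
    residual = [ax - yi for ax, yi in zip(matvec(A, x), y)]
    data_fit = 0.5 * sum(r * r for r in residual)
    penalty = lam * sum(abs(t) for t in matvec(D, x))
    return data_fit + penalty


# Toy example: a piecewise-constant signal observed directly (A is the identity).
n = 5
A = identity(n)
y = [1.0, 1.0, 1.0, 3.0, 3.0]
D = difference_operator(n)

# x = y fits the data exactly; D x has a single nonzero entry (the jump),
# so the transformed variables are sparse even though x itself is dense.
transformed = matvec(D, y)
gl_value = generalized_lasso_objective(A, y, D, y, lam=0.5)

# With D = identity, the penalty acts on x directly, as in the traditional LASSO.
lasso_value = generalized_lasso_objective(A, y, identity(n), y, lam=0.5)
```

In this toy case the signal has no zero entries, so the traditional LASSO penalty is large, while the difference-based penalty only pays for the single jump.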

Proposed Formulation with a Binary Indicator for Generalized LASSO

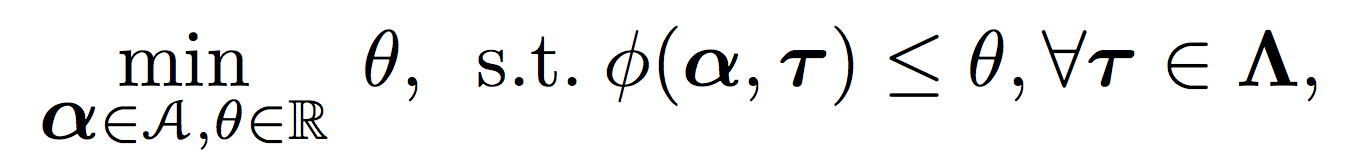

Proposed QCLP formulation for Generalized LASSO

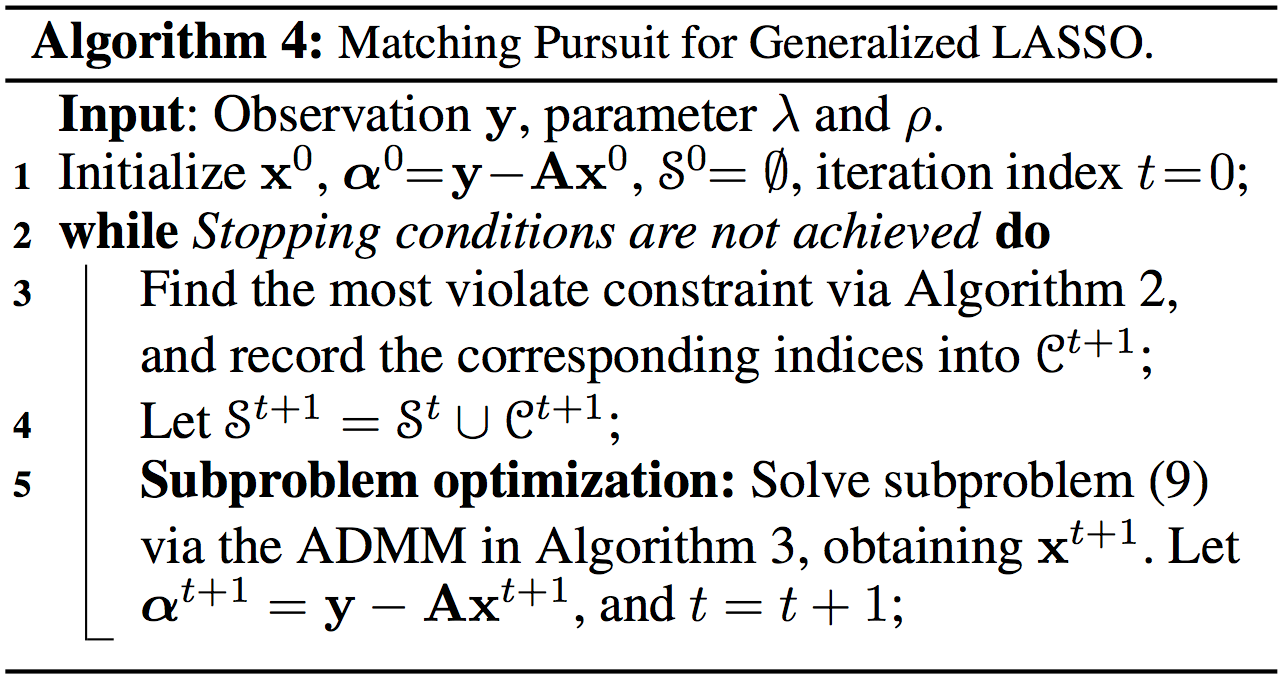

Algorithm

MPGL for Solving the Generalized LASSO Problem in QCLP Formulation

Our Related Work

- Self-paced Kernel Estimation for Robust Blind Image Deblurring. Dong Gong, Mingkui Tan, Yanning Zhang, Anton van den Hengel, Qinfeng Shi. In IEEE International Conference on Computer Vision (ICCV), 2017. [Data&Results]

- MPTV: Matching Pursuit Based Total Variation Minimization for Image Deconvolution. Dong Gong, Mingkui Tan, Qinfeng Shi, Anton van den Hengel, Yanning Zhang. In IEEE Transactions on Image Processing (TIP), Volume 28, Issue 4, 2019. [arXiv]